ILIAS: Instance-Level Image retrieval At Scale

Giorgos Kordopatis-Zilos Vladan Stojnić Anna Manko Pavel Šuma Nikos Efthymiadis Nikolaos-Antonios Ypsilantis Zakaria Laskar Jiří Matas Ondřej Chum Giorgos Tolias

Visual Recognition Group, Faculty of Electrical Engineering, Czech Technical University in Prague

Dataset intro

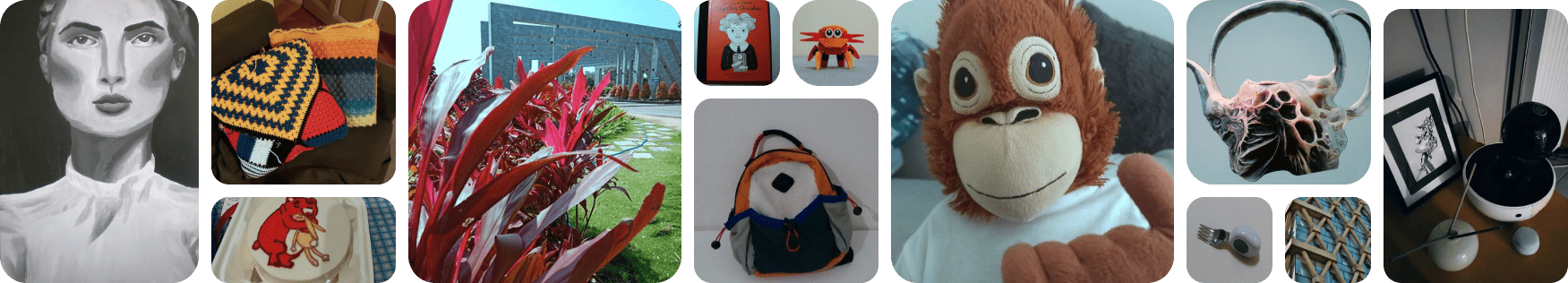

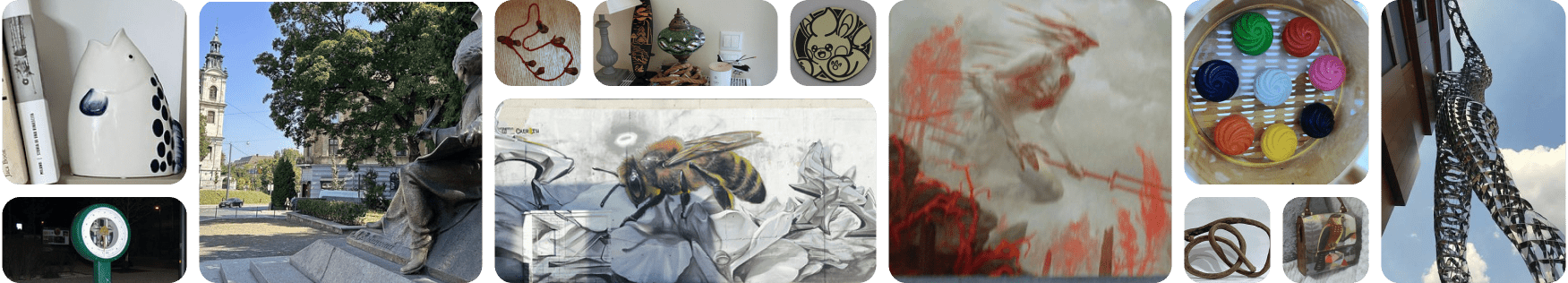

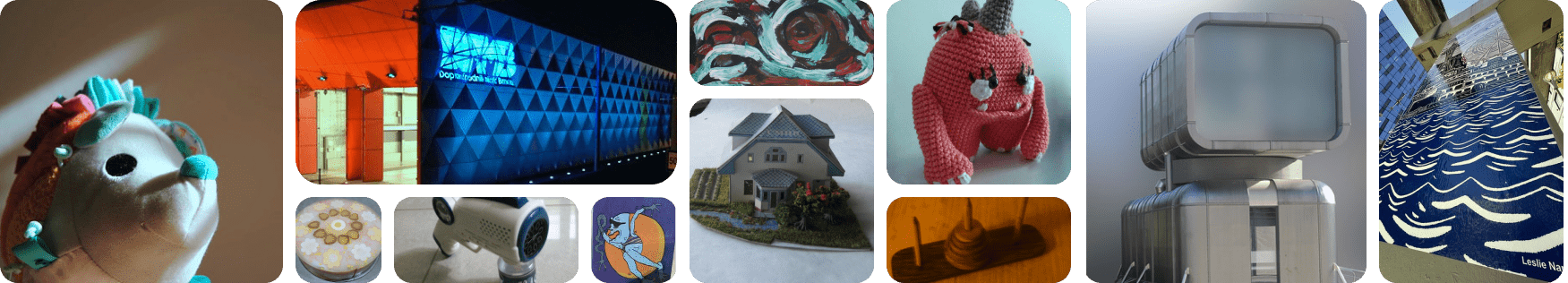

This work introduces ILIAS, a new test dataset for Instance-Level Image retrieval At Scale. It is designed to support future research in image-to-image and text-to-image retrieval for particular objects, and it additionally serves as a large-scale benchmark for evaluating the representations of foundation vision and vision-language models (VLMs). ILIAS includes queries and positive images for 1,000 object instances, covering diverse conditions and domains. Retrieval is done against 100M distractor images from YFCC100M. To avoid false negatives, only query objects that emerged after 2014, the YFCC100M compilation date, are included.

Key insights from extensive benchmarking:

- models fine-tuned on specific domains, such as landmarks or products, excel in that domain but fail on ILIAS

- learning a linear adaptation layer using multi-domain class supervision results in performance improvements, especially for vision-and-language models

- local descriptors in retrieval re-ranking are still a key ingredient, especially in the presence of severe background clutter

- the text-to-image performance of the vision-language foundation models is surprisingly close to the corresponding image-to-image case
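The second insight above concerns a linear adaptation layer placed on top of frozen descriptors. As a minimal sketch of how such a layer could be applied at retrieval time, assume a learned weight matrix `W` is already available. Here `W` is a hypothetical, hand-set 2×3 matrix, and the training with multi-domain class supervision is not shown. The descriptor is projected and then L2-normalized again, so that dot products between adapted descriptors remain cosine similarities:

```python
import math

def l2_normalize(v):
    """Scale a vector to unit Euclidean length (zero vectors are returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm > 0 else list(v)

def adapt(descriptor, weight):
    """Apply a linear layer (one row of `weight` per output dimension), then re-normalize."""
    projected = [sum(w * x for w, x in zip(row, descriptor)) for row in weight]
    return l2_normalize(projected)

# Hypothetical values for illustration only; a real W would be learned.
W = [[1.0, 0.0, 0.0],
     [0.0, 1.0, 1.0]]
frozen_descriptor = l2_normalize([3.0, 0.0, 4.0])
adapted = adapt(frozen_descriptor, W)
```

Because the backbone stays frozen in this sketch, the same layer would be applied to both query and database descriptors before comparing them.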

Instances were manually collected to capture challenging conditions and diverse domains.

Large-scale retrieval is conducted against 100M distractor images from YFCC100M.

To avoid false negatives, all objects are known to have been designed after 2014, the YFCC100M compilation date.

SOTA models were evaluated during the dataset collection.

Benchmark

Global representation models for Image-to-Image

| checkpoint | year | repo | arch | train | Dataset | Data Size | Train Res | Test Res | 5M | 100M† | 100M |
|---|---|---|---|---|---|---|---|---|---|---|---|
| alexnet.tv_in1k | 2012 | torchvision | CNN | sup | in1k | 1M | 224 | 384 | 1.9 | 1.3 | 1.5 |
| vgg16.tv_in1k | 2014 | torchvision | CNN | sup | in1k | 1M | 224 | 384 | 2.3 | 1.6 | 2.3 |
| resnet50.tv_in1k | 2015 | torchvision | R50 | sup | in1k | 1M | 224 | 384 | 2.5 | 1.8 | 1.7 |
| resnet101.tv_in1k | 2015 | torchvision | R101 | sup | in1k | 1M | 224 | 384 | 2.7 | 1.8 | 1.9 |
| densenet169.tv_in1k | 2016 | torchvision | CNN | sup | in1k | 1M | 224 | 384 | 2.9 | 2.0 | 2.4 |
| inception_v4.tf_in1k | 2017 | torchvision | CNN | sup | in1k | 1M | 299 | 512 | 1.5 | 1.0 | 1.1 |
| nasnetalarge.tf_in1k | 2018 | torchvision | CNN | sup | in1k | 1M | 331 | 512 | 1.6 | 1.0 | 1.0 |
| tf_efficientnet_b4.ns_jft_in1k | 2019 | timm | CNN | sup+dist | in1k | 1M | 380 | 512 | 4.3 | 2.9 | 2.6 |
| vit_base_patch16_224.augreg_in1k | 2020 | timm | ViT-B | sup | in1k | 1M | 224 | 384 | 1.9 | 1.3 | 1.0 |
| vit_base_patch16_224.augreg_in21k | 2020 | timm | ViT-B | sup | in21k | 14M | 224 | 384 | 6.2 | 4.4 | 3.0 |
| vit_large_patch16_224.augreg_in21k | 2020 | timm | ViT-L | sup | in21k | 14M | 224 | 384 | 7.3 | 5.3 | 4.6 |
| vit_large_patch16_224.augreg_in21k_ft_in1k | 2020 | timm | ViT-L | sup | in1k | 1M | 224 | 384 | 6.6 | 4.7 | 3.6 |
| vit_large_patch16_384.augreg_in21k_ft_in1k | 2020 | timm | ViT-L | sup | in1k | 1M | 384 | 512 | 8.7 | 6.4 | 5.3 |
| deit3_base_patch16_224.fb_in1k | 2021 | timm | ViT-B | sup+dist | in1k | 1M | 224 | 384 | 2.7 | 1.8 | 1.2 |
| deit3_large_patch16_224.fb_in1k | 2021 | timm | ViT-L | sup+dist | in1k | 1M | 224 | 384 | 3.3 | 2.4 | 1.5 |
| RN50.openai | 2021 | github | R50 | vla | openai | 400M | 224 | 384 | 8.5 | 6.0 | 3.2 |
| vit_base_patch16_clip_224.openai | 2021 | timm | ViT-B | vla | openai | 400M | 224 | 384 | 10.7 | 7.9 | 4.2 |
| vit_large_patch14_clip_224.openai | 2021 | timm | ViT-L | vla | openai | 400M | 224 | 384 | 15.8 | 11.9 | 7.0 |
| vit_large_patch14_clip_336.openai | 2021 | timm | ViT-L | vla | openai | 400M | 336 | 512 | 19.9 | 15.2 | 9.4 |
| vit_large_patch14_clip_224.laion2b | 2021 | timm | ViT-L | vla | laion2b | 2B | 224 | 384 | 17.5 | 13.7 | 9.4 |
| swav_resnet50 | 2021 | github | R50 | ssl | in1k | 1M | 224 | 384 | 2.9 | 2.1 | 1.7 |
| dino_resnet50 | 2021 | github | R50 | ssl | in1k | 1M | 224 | 384 | 4.1 | 2.9 | 2.9 |
| dino_vitb16 | 2021 | github | ViT-B | ssl | in1k | 1M | 224 | 384 | 6.6 | 4.8 | 3.7 |
| moco_v3_resnet50 | 2021 | github | R50 | ssl | in1k | 1M | 224 | 384 | 3.4 | 2.6 | 2.6 |
| moco_v3_vitb | 2021 | github | ViT-B | ssl | in1k | 1M | 224 | 384 | 3.2 | 2.3 | 1.9 |
| convnext_base.fb_in1k | 2022 | timm | CN-B | sup | in1k | 1M | 288 | 384 | 3.9 | 2.7 | 2.0 |
| convnext_base.fb_in22k | 2022 | timm | CN-B | sup | in22k | 14M | 224 | 384 | 9.9 | 7.6 | 6.4 |
| convnext_large.fb_in1k | 2022 | timm | CN-L | sup | in1k | 1M | 288 | 384 | 4.2 | 2.9 | 2.2 |
| convnext_large.fb_in22k | 2022 | timm | CN-L | sup | in22k | 14M | 288 | 384 | 9.1 | 6.9 | 6.6 |
| convnext_base.clip_laion2b_augreg | 2022 | timm | CN-B | vla | laion2b | 2B | 256 | 384 | 18.1 | 14.0 | 7.9 |
| convnext_large_mlp.clip_laion2b_ft_soup_320 | 2022 | timm | CN-L | vla | laion2b | 2B | 320 | 512 | 22.9 | 18.3 | 9.6 |
| recall_512-resnet50 | 2022 | github* | R50 | sup | sop | 60k | 224 | 384 | 3.1 | 2.1 | 1.6 |
| recall_512-vit_base_patch16_224_in21k | 2022 | github* | ViT-B | sup | sop | 60k | 224 | 384 | 7.3 | 5.3 | 5.0 |
| cvnet_resnet50 | 2022 | github | R50 | sup | gldv2 | 1M | 512 | 724 | 3.5 | 2.6 | 2.9 |
| cvnet_resnet101 | 2022 | github | R101 | sup | gldv2 | 1M | 512 | 724 | 4.2 | 3.1 | 3.0 |
| superglobal_resnet50 | 2023 | github | R50 | sup | in1k | 1M | 224 | 384 | 3.0 | 2.4 | 2.0 |
| superglobal_resnet101 | 2023 | github | R101 | sup | gldv2 | 1M | 512 | 724 | 4.5 | 3.2 | 3.4 |
| hier_dino_vits16_sop | 2023 | github* | ViT-S | sup | sop | 60k | 224 | 384 | 5.1 | 3.6 | 3.3 |
| eva02_base_patch14_224.mim_in22k | 2023 | timm | ViT-B | ssl | in22k | 14M | 224 | 384 | 4.7 | 3.2 | 2.1 |
| eva02_large_patch14_224.mim_in22k | 2023 | timm | ViT-L | ssl | in22k | 14M | 224 | 384 | 3.9 | 2.7 | 1.5 |
| eva02_large_patch14_224.mim_m38m | 2023 | timm | ViT-L | ssl | merged38m | 38M | 224 | 384 | 8.8 | 6.1 | 4.7 |
| eva02_base_patch16_clip_224.merged2b | 2023 | timm | ViT-B | vla | merged2b | 2B | 224 | 384 | 11.7 | 8.7 | 5.9 |
| eva02_large_patch14_clip_336.merged2b | 2023 | timm | ViT-L | vla | merged2b | 2B | 336 | 512 | 20.9 | 16.0 | 10.9 |
| unicom_vit_base_patch16_224 | 2023 | github | ViT-B | dist | laion400m | 400M | 224 | 384 | 13.8 | 11.1 | 11.0 |
| unicom_vit_large_patch14_224 | 2023 | github | ViT-L | dist | laion400m | 400M | 224 | 384 | 17.7 | 13.8 | 13.8 |
| unicom_vit_large_patch14_336 | 2023 | github | ViT-L | dist | laion400m | 400M | 336 | 512 | 18.6 | 14.6 | 13.9 |
| unicom_vit_base_patch16_gldv2 | 2023 | github* | ViT-B | sup | gldv2 | 400M | 512 | 724 | 4.1 | 3.3 | 3.0 |
| unicom_vit_base_patch16_sop | 2023 | github* | ViT-B | sup | sop | 400M | 224 | 384 | 12.8 | 9.9 | 9.1 |
| uscrr_64-vit_base_patch16_clip_224.openai | 2023 | github | ViT-B | sup | uned | 2.8M | 224 | 724 | 6.4 | 4.3 | 3.8 |
| dinov2_vitb14 | 2023 | github | ViT-B | ssl | lvd142m | 142M | 518 | 724 | 15.0 | 12.1 | 11.5 |
| dinov2_vitl14 | 2023 | github | ViT-L | ssl | lvd142m | 142M | 518 | 724 | 18.8 | 15.3 | 15.3 |
| vit_base_patch16_siglip_224.webli | 2023 | timm | ViT-B | vla | webli | 10B | 224 | 384 | 19.4 | 15.7 | 11.2 |
| vit_base_patch16_siglip_256.webli | 2023 | timm | ViT-B | vla | webli | 10B | 256 | 384 | 20.6 | 16.7 | 11.5 |
| vit_base_patch16_siglip_384.webli | 2023 | timm | ViT-B | vla | webli | 10B | 384 | 512 | 26.2 | 21.5 | 15.6 |
| vit_base_patch16_siglip_512.webli | 2023 | timm | ViT-B | vla | webli | 10B | 512 | 724 | 27.5 | 23.0 | 16.6 |
| vit_large_patch16_siglip_256.webli | 2023 | timm | ViT-L | vla | webli | 10B | 256 | 384 | 26.3 | 21.8 | 15.2 |
| vit_large_patch16_siglip_384.webli | 2023 | timm | ViT-L | vla | webli | 10B | 384 | 512 | 34.3 | 28.9 | 19.6 |
| vit_base_patch16_clip_224.metaclip_2pt5b | 2024 | timm | ViT-B | vla | 2pt5b | 2.5B | 224 | 384 | 12.7 | 9.4 | 6.6 |
| vit_large_patch14_clip_224.metaclip_2pt5b | 2024 | timm | ViT-L | vla | 2pt5b | 2.5B | 224 | 384 | 21.7 | 16.9 | 11.7 |
| dinov2_vitb14_reg | 2024 | github | ViT-B | ssl | lvd142m | 142M | 518 | 724 | 13.5 | 10.7 | 9.4 |
| dinov2_vitl14_reg | 2024 | github | ViT-L | ssl | lvd142m | 142M | 518 | 724 | 17.1 | 13.6 | 12.7 |
| unic_l | 2024 | github | ViT-L | dist | in1k | 1M | 518 | 512 | 15.3 | 11.7 | 8.9 |
| udon_64-vitb_in21k_ft_in1k | 2024 | github* | ViT-B | sup | uned | 2.8M | 224 | 384 | 7.3 | 5.3 | 5.5 |
| udon_64-vitb_clip_openai | 2024 | github* | ViT-B | sup | uned | 2.8M | 224 | 384 | 9.2 | 6.7 | 5.9 |
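Each row above corresponds to a model that maps an image to a single global descriptor, with retrieval then reducing to ranking database descriptors by similarity to the query. The following is a minimal sketch of that ranking step together with one possible ranking metric. The metric is an assumption: this page does not state which one the table reports, so the truncated average precision below is for illustration only, and all vectors are toy values rather than real model outputs.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit Euclidean length (zero vectors are returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm > 0 else list(v)

def rank_database(query, database):
    """Return database indices sorted by decreasing cosine similarity to the query."""
    q = l2_normalize(query)
    sims = []
    for idx, vec in enumerate(database):
        d = l2_normalize(vec)
        sims.append((sum(a * b for a, b in zip(q, d)), idx))
    # Ties are broken by the lower index to keep the ranking deterministic.
    sims.sort(key=lambda s: (-s[0], s[1]))
    return [idx for _, idx in sims]

def average_precision_at_k(ranking, positives, k):
    """Average precision over the top-k ranks, normalized by min(k, number of positives)."""
    positives = set(positives)
    if not positives:
        return 0.0
    hits, precision_sum = 0, 0.0
    for rank, idx in enumerate(ranking[:k], start=1):
        if idx in positives:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / min(k, len(positives))

# Toy descriptors: indices 0 and 3 are positives, the rest act as distractors.
database = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1], [0.5, 0.5]]
query = [1.0, 0.05]
ranking = rank_database(query, database)
ap = average_precision_at_k(ranking, positives=[0, 3], k=4)
```

At the scale of 100M distractors an exhaustive Python loop like this would be impractical; the sketch only illustrates the logic of ranking and scoring.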

Global representation models for Text-to-Image

| checkpoint | year | repo | arch | dims | Dataset | Data Size | Train Res | Test Res | 5M | 100M |
|---|---|---|---|---|---|---|---|---|---|---|
| RN50.openai | 2021 | oc | R50 | 1024 | openai | 400M | 224 | 384 | 2.2 | 1.4 |
| vit_base_patch16_clip_224.openai | 2021 | timm+oc | ViT-B | 512 | openai | 400M | 224 | 384 | 2.8 | 1.6 |
| vit_large_patch14_clip_224.openai | 2021 | timm+oc | ViT-L | 768 | openai | 400M | 224 | 384 | 6.6 | 4.5 |
| vit_large_patch14_clip_336.openai | 2021 | timm+oc | ViT-L | 768 | openai | 400M | 336 | 512 | 8.2 | 5.7 |
| vit_large_patch14_clip_224.laion2b | 2021 | timm+oc | ViT-L | 768 | laion2b | 2B | 224 | 384 | 8.8 | |
| convnext_base.clip_laion2b_augreg | 2022 | timm+oc | CN-B | 640 | laion2b | 2B | 256 | 384 | 6.6 | |
| convnext_large_mlp.clip_laion2b_ft_soup_320 | 2022 | timm+oc | CN-L | 768 | laion2b | 2B | 320 | 512 | 10.9 | |
| eva02_base_patch16_clip_224.merged2b | 2023 | timm+oc | ViT-B | 512 | merged2b | 2B | 224 | 384 | 4.4 | |
| eva02_large_patch14_clip_336.merged2b | 2023 | timm+oc | ViT-L | 768 | merged2b | 2B | 336 | 512 | 10.0 | |
| vit_base_patch16_siglip_224.webli | 2023 | timm+hf | ViT-B | 768 | webli | 10B | 224 | 384 | 9.5 | 6.7 |
| vit_base_patch16_siglip_256.webli | 2023 | timm+hf | ViT-B | 768 | webli | 10B | 256 | 384 | 9.7 | 7.0 |
| vit_base_patch16_siglip_384.webli | 2023 | timm+hf | ViT-B | 768 | webli | 10B | 384 | 512 | 13.6 | 10.4 |
| vit_base_patch16_siglip_512.webli | 2023 | timm+hf | ViT-B | 768 | webli | 10B | 512 | 724 | 13.7 | 10.3 |
| vit_large_patch16_siglip_256.webli | 2023 | timm+hf | ViT-L | 1024 | webli | 10B | 256 | 384 | 15.6 | 12.2 |
| vit_large_patch16_siglip_384.webli | 2023 | timm+hf | ViT-L | 1024 | webli | 10B | 384 | 512 | 20.9 | 17.0 |
| vit_base_patch16_clip_224.metaclip_2pt5b | 2024 | timm+oc | ViT-B | 768 | 2pt5b | 2.5B | 224 | 384 | 7.0 | 4.5 |
| vit_large_patch14_clip_224.metaclip_2pt5b | 2024 | timm+oc | ViT-L | 1024 | 2pt5b | 2.5B | 224 | 384 | 11.8 | 8.3 |

Local representation models for Image-to-Image with Reranking

| checkpoint | year | repo | arch | dims | Dataset | Data Size | Train Res | Test Res | 5M | 100M† | 100M |
|---|---|---|---|---|---|---|---|---|---|---|---|
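Re-ranking with local descriptors typically means taking a shortlist produced by global-descriptor search and re-ordering it with a finer, more expensive similarity. The sketch below illustrates only that two-stage structure. The scoring function (sum of best dot products per query local descriptor) is a simple placeholder chosen for illustration and is not one of the methods evaluated in this benchmark; the names `local_similarity` and `rerank` are hypothetical.

```python
def local_similarity(query_locals, image_locals):
    """Sum, over query local descriptors, of the best dot product with any image local descriptor."""
    total = 0.0
    for q in query_locals:
        # default=0.0 covers images that have no local descriptors at all.
        total += max((sum(a * b for a, b in zip(q, d)) for d in image_locals), default=0.0)
    return total

def rerank(initial_ranking, query_locals, database_locals, top_k):
    """Re-order the first top_k entries of a ranking by local similarity; keep the tail unchanged."""
    head = initial_ranking[:top_k]
    tail = initial_ranking[top_k:]
    # sorted() is stable, so ties keep their order from the initial ranking.
    head = sorted(head, key=lambda idx: -local_similarity(query_locals, database_locals[idx]))
    return head + tail

# Toy example: image 2 matches both query local descriptors, images 0 and 1 match one each.
query_locals = [[1.0, 0.0], [0.0, 1.0]]
database_locals = [
    [[1.0, 0.0]],
    [[0.0, 1.0]],
    [[1.0, 0.0], [0.0, 1.0]],
]
reranked_all = rerank([0, 1, 2], query_locals, database_locals, top_k=3)
reranked_top2 = rerank([0, 1, 2], query_locals, database_locals, top_k=2)
```

Only the shortlist is re-scored, so the cost of the local comparison is bounded by `top_k` regardless of the database size.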

Explore the collected data for your instance-level research!


Citation

If you find our project useful, please consider citing us:

@article{#coming-soon,
  title={ILIAS: Instance-Level Image retrieval At Scale},
  author={},
  journal={#coming-soon},
  year={#coming-soon},
}

Results

Submit your results here:

If you have any further questions, please don't hesitate to reach out to georgekordopatis.gmail.com

Acknowledgment

We are grateful to everyone who contributed to ILIAS. A special thank you to Larysa Ivashechkina for her invaluable work in data annotation. We also appreciate the efforts and participation of all the external data collectors — Yankun Wu, Noa Garcia, Yannis Kalantidis, Dmytro Mishkin, Tomáš Jelínek, Dimitris Karageorgiou, Markos Zampoglou, Celeste Abreu, Aggeliki Tserota, Christina Tserota, Eleni Karantali, Eva Tsiliakou, Kelly Kordopati, Panagiotis Tassis, Ruslan Rozumnyi.